One of the striking things about statistics as a discipline is how new it is. The standard deviation was introduced in 1892. The t-distribution was published in 1908. The term “central tendency” dates from the late 1920s. The randomized controlled trial began development in the 1920s, and the first modern RCT wasn’t published until 1948. The box-plot wasn’t invented until 1970! And complicated, difficult-to-use new inventions usually take a long time before they go mainstream and really affect society.

The scientific method is amazingly young and it continues to advance rapidly. The natural sciences first adopted experimental methods in the 1600s with Galileo Galilei and Sir Isaac Newton being archetypal examples. But if you didn’t know the future, you would NOT have predicted that numerical evidence would become mainstream. Even these two archetypal examples didn’t portend that well for science since Newton was a fervent believer in the occult and alchemy and Galileo was sent to prison for doing science. But the scientific method gradually took over the physical sciences like physics and chemistry and gradually expanded into the medical and social sciences too.

Evidence-based medicine gradually started to become mainstream in the early 1900s. For example, in 1923, The Lancet, a premier medical journal, asked, “Is the application of the numerical method to the subject-matter of medicine a trivial and time-wasting ingenuity as some hold…?” Today The Lancet mostly relies upon numerical data for evidence. But we still have a long way to go. The term “evidence-based medicine” wasn’t coined until 1990 or 1992 (depending on the claim) and one study found that only about 18% of physicians’ decisions are evidence-based. Fortunately, science has been accelerating over the past century:

- Randomized controlled trials (RCTs) first became commonplace in the 1960s and their number has grown exponentially since.

- Laboratory experiments in economics started getting common in the 1950s and field experiments (RCTs) took off in the 1990s, winning the Nobel Prize in 2019.

- “Moneyball” sports analytics took off in the 1990s.

- Evidence-based management became a trending topic in the 2000s.

- The effective altruism movement to promote evidence-based philanthropy began to coalesce around 2011, when its proponents first started using the term “effective altruism”.

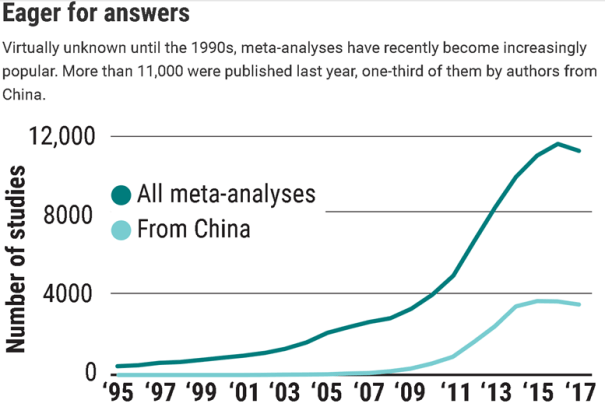

- Meta-analysis was rare before the 1990s and grew exponentially in the 2000s as shown below.

- The replication crisis of the 2010s grew out of applying meta-science to the study of scientific methodology. It began in psychology, where it is revolutionizing research. The other social sciences and medicine are grappling with similar problems of flawed research methodology, and that reckoning should lead to similar improvements in knowledge.

In my previous post, I showed some of the glorious story of RCTs, but meta-science shows that RCTs vary widely in quality. As a result, two different RCTs studying the same thing often reach different conclusions. To deal with contradictory RCTs, statisticians can combine the results of multiple RCTs into a meta-analysis, which can also weight each RCT (or observational study) according to its quality:

The term meta-analysis was coined in 1976 by statistician Gene Glass …who described it as “an analysis of analyses.” Glass, who worked in education psychology, had undergone a psychoanalytic treatment and found it to work very well; he was annoyed by critics of psychoanalysis, including Hans Eysenck, a famous psychologist at King’s College London, who Glass said was cherry picking studies to show that psychoanalysis wasn’t effective, whereas behavior therapy was.

At the time, most literature reviews took a narrative approach; a prominent scientist would walk the reader through their selection of available studies and draw conclusions at the end. Glass introduced the concept of a systematic review, in which the literature is scoured using predefined search and selection criteria. Papers that don’t meet those criteria are tossed out; the remaining ones are screened and the key data are extracted. If the process yields enough reasonably similar quantitative data, the reviewer can do the actual meta-analysis, a combined analysis in which the studies’ effect sizes are weighed.

Today, meta-analyses are a growth industry. Their number has shot up from fewer than 1000 in the year 2000 to some 11,000 [in 2017]. The increase was most pronounced in China, which now accounts for about one-third of all meta-analyses. Metaresearcher John Ioannidis of Stanford University in Palo Alto, California, has suggested meta-analyses may be so popular because they can be done with little or no money, are publishable in high-impact journals, and are often cited.

Yet they are less authoritative than they seem, in part because of what methodologists call “many researcher degrees of freedom.” “Scientists have to make several decisions and judgment calls that influence the outcome of a meta-analysis,” says Jos Kleijnen, founder of the company Kleijnen Systematic Reviews in Escrick, U.K. They can include or exclude certain study types, limit the time period, include only English-language publications or peer-reviewed papers, and apply strict or loose study quality criteria, for instance. “All these steps have a certain degree of subjectivity,” Kleijnen says. “Anyone who wants to manipulate has endless possibilities.”

His company analyzed 7212 systematic reviews and concluded that when Cochrane reviews were set aside, only 27% of the meta-analyses had a “low risk of bias.” Among Cochrane reviews [which has the highest reputation], 87% were at low risk of bias…

Money is one potential source of bias… In 2006, for instance, the Nordic Cochrane Centre in Copenhagen compared Cochrane meta-analyses of drug efficacy, which are never funded by the industry, with those produced by other groups. It found that seven industry-funded reviews all had conclusions that recommended the drug without reservations; none of the Cochrane analyses of the same drugs did. Industry-funded systematic reviews also tended to be less transparent. Ioannidis found in a 2016 review that industry-sponsored meta-analyses of antidepressant efficacy almost never mentioned caveats about the drugs in their abstracts.

Unfortunately, the quality of meta-analyses also varies widely, so you cannot trust a meta-analysis any more than a piece of original research unless you know the quality of its methodology. Fortunately, high-quality meta-analyses are easy to find for medical research: Cochrane reviews are the best place to look for the state of the art. Sometimes they are a little out of date, but nobody does it better.
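For readers who want to see what "combining" studies looks like in practice, here is a minimal sketch of one common approach, fixed-effect inverse-variance pooling. It is only an illustration under stated assumptions: the effect sizes and standard errors are made up, the function name `pool_fixed_effect` is hypothetical, and the weights reflect each study's statistical precision rather than a judgment about its methodological quality. Real meta-analyses usually involve more than this (heterogeneity checks, random-effects models, bias assessment).

```python
import math


def pool_fixed_effect(effects, std_errors):
    """Pool study effect sizes with inverse-variance (fixed-effect) weights.

    Each study is weighted by 1 / SE^2, so more precise studies count more.
    Returns the pooled effect and its standard error.
    """
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Hypothetical data: three trials of the same treatment (not real results).
effects = [0.30, 0.10, 0.25]      # estimated effect size from each trial
std_errors = [0.10, 0.20, 0.10]   # standard error of each estimate

pooled, pooled_se = pool_fixed_effect(effects, std_errors)

# Approximate 95% confidence interval, assuming a normal sampling distribution.
ci_low = pooled - 1.96 * pooled_se
ci_high = pooled + 1.96 * pooled_se
print(f"pooled effect = {pooled:.3f}, 95% CI = ({ci_low:.3f}, {ci_high:.3f})")
```

Notice that the imprecise second trial, with twice the standard error of the others, gets only a quarter of their weight and barely moves the pooled estimate. Every choice the quoted passage warns about (which studies to include, how to score them) happens before this arithmetic, which is why the arithmetic alone cannot make a meta-analysis trustworthy.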
